So long, CrashPlan! After I had used it for 5 years, CrashPlan decided, with less than a day’s notice, to delete many of the files I had backed up. Once again, the deal got altered. Deleting files with no advance notice is something I might expect from a totalitarian leader, but it isn’t acceptable for a backup service.

CrashPlan used to be the best offering for backups by far, but those days are gone. I needed to find something else. To start with I noted my requirements for a backup solution:

- Fully Automated. I am not going to remember to do something like take a backup on a regular basis. Between the demands from all aspects of life I already have trouble doing the thousands of things I should already be doing and I don’t need another thing to remember.

- Should alert me on failure. If my backups start failing, I want to know. I don’t want to have to check on the status periodically.

- Efficient with bandwidth, time, and price.

- Protect against my backup threat model (below).

- Not Unlimited. I’m tired of “unlimited” backup providers like CrashPlan not being able to handle unlimited and going out of business or altering the deal. I either want to provide my own hardware or pay by the GB.

Backup Strategy

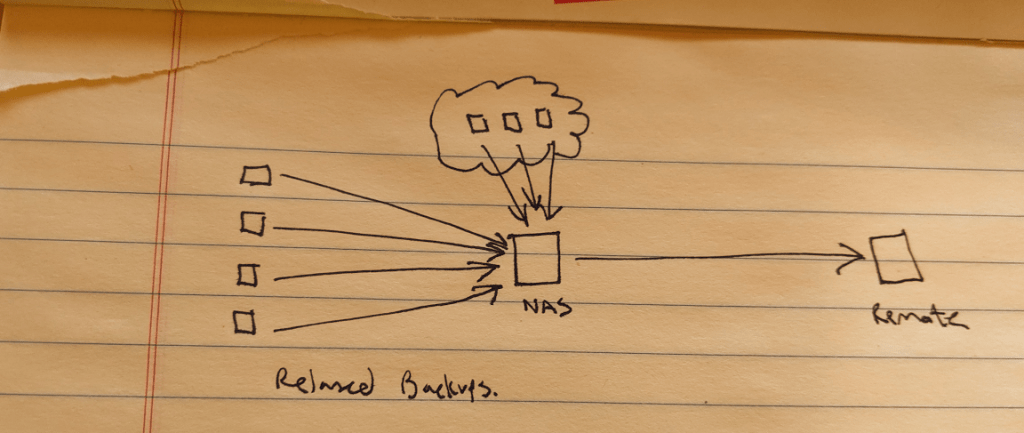

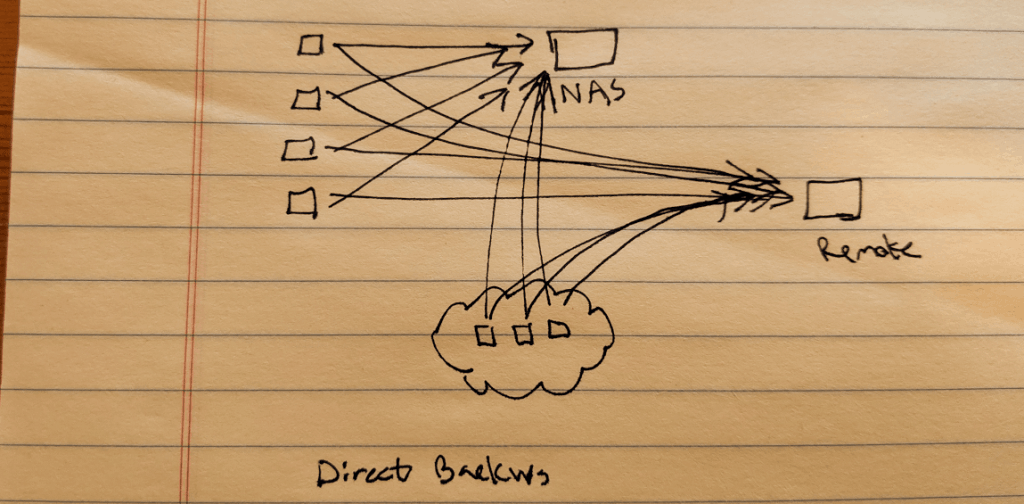

This also gave me a good opportunity to review my backup strategy. I had been using a strategy where all local and cloud devices backed up to a NAS on my network, and then those backups were relayed to a remote (formerly CrashPlan) backup service. The other model is a direct backup, where each device backs up straight to the remote destination. I like this a little better: living in North Idaho I don’t have a good upload speed, and in several cases my remote backups from the NAS would never complete because I didn’t have enough bandwidth to keep up.

Now if Ting could get permission to run fiber under the railroad tracks and to my house I’d have gigabit upload speed, but until then the less I have to upload from home the better.

Backup Threat Model

It’s best practice to think through all the threats you are protecting against. If you don’t do this exercise you may not think about something important… like keeping your only backup in the same location as your computer. My backup threat model (these are the threats which my backups should protect against):

- Disasters. If a fire sweeps through North Idaho burning every building but I somehow survive, I want my data. So I must have offsite backups, with at least one in a geographically separate area from me. We can assume that all keys and hardware tokens will be lost in a disaster, so those must not be required to restore.

- Malware or ransomware. Must have an unavailable or offline backup.

- Physical theft or data leaks. Backups must be encrypted.

- Silent Data Corruption. Data integrity must be verified regularly and protected against bitrot.

- Time. I do not ever want to lose more than a day’s worth of work, so backups must run on a daily basis and must not consume too much of my time maintaining them.

- Fast and easy targeted restores. I may need to recover an individual file I have accidentally deleted.

- Accidental Corruption. I may have a file corrupted or accidentally overwrite it and may not realize it until a week later or even a year later. Therefore I need versioned backups so I can restore a file from points in time going back several years.

- Complexity. If something were to happen to me, the workstation backups must be simple enough that Kris would be able to get to them. It’s okay if she has to call one of my tech friends for help, but it should be simple enough that they could figure it out.

- Non-payment of backup services. Backups must persist on their own in the event that I am unaware of failed payments or unable to pay for backups. If I’m traveling and my CC gets compromised I don’t want to not have backups.

- Bad backup software. The last thing you need is your backup software corrupting all your data because of some bug (I have seen this happen with rsync) so it should be stable. Looking at the git history I should be seeing minor fixes and infrequent releases instead of major rewrites and data corruption bug fixes.

My friend Meredith had contacted me about swapping backup storage. We’re geographically separated, so that covers local disasters. So that’s what we did: each of us set up an SSH/SFTP server for the other to back up to. I had plenty of space on my Proxmox environment so I created a VM for him and put it in an isolated DMZ. He had a Raspberry Pi and bought a new 4TB Western Digital external USB drive that he set up at his house for me.

Duplicati Backup Solution for Workstations

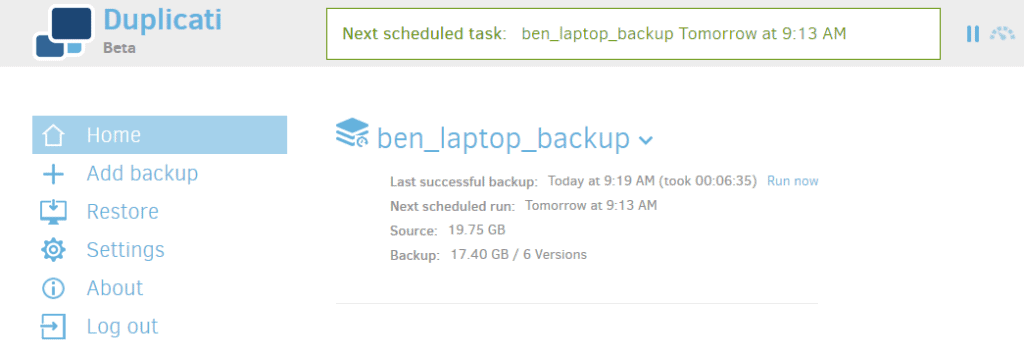

For Windows desktops I chose Duplicati 2. It also works with Mac and Linux, but for my purposes I only evaluated Windows.

Duplicati has a nice local web interface. It’s simple and easy to use. Adding a new backup job is straightforward and gives plenty of options for my backup sets and destinations (this allows me to back up not only to a remote SFTP server, but also to cloud services such as Backblaze B2 or Amazon S3).

Duplicati 2 has status icons in the system tray that quickly indicate any issues. The first few runs I was seeing a red icon indicating the backup had an error. Looking at the log it was because I had left programs open locking files it was trying to back up. I like that it warns about this instead of silently not backing up files.

Green=In Progress, Grey=Paused, Black=Idle, Red=Error on the last backup.

Duplicati 2 seems to work well. I have tested restores and they come back pretty quickly. I can back up to my NAS as well as a remote server and a cloud server.

Two things I don’t care for in Duplicati 2:

- It is still labeled Beta. That said it is a lot more stable than some GA software I’ve used.

- There are too many projects with similar names. Duplicati, Duplicity, Duplicacy. It’s hard to keep them straight.

Other considerations for workstation backups:

- rsync – no GUI

- restic – no GUI

- Borg backup – Windows not officially supported

- Duplicacy – license only allows personal use

Restic Backup for Linux Servers

I settled on Restic for Linux servers. I have used Restic on several small projects over the years and it is a solid backup program. Once the environment variables are set, it’s one command to back up or restore, and that command can be run from cron.

It’s also easy to mount any point in time snapshot as a read-only filesystem.
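A minimal sketch of what "one command" looks like in practice, under some assumptions: the repository URL, password file path, and directories below are placeholders invented for illustration. `RESTIC_REPOSITORY` and `RESTIC_PASSWORD_FILE` are environment variables restic reads, and the functions only build the command lines rather than running them.

```python
import os
import shlex

# Hypothetical values for illustration; substitute your own repository and paths.
REPO = "sftp:backupuser@backup.example.com:/storage/resticbk"
PASSWORD_FILE = "/root/.restic-password"


def restic_env(repo: str = REPO, password_file: str = PASSWORD_FILE) -> dict:
    """Return a copy of the environment with restic's repository settings added."""
    env = dict(os.environ)
    env["RESTIC_REPOSITORY"] = repo
    env["RESTIC_PASSWORD_FILE"] = password_file
    return env


def backup_cmd(paths: list[str]) -> list[str]:
    """Command line for backing up the given paths."""
    return ["restic", "backup", *paths]


def restore_cmd(target: str, snapshot: str = "latest") -> list[str]:
    """Command line for restoring a snapshot into a target directory."""
    return ["restic", "restore", snapshot, "--target", target]


def mount_cmd(mountpoint: str) -> list[str]:
    """Command line for browsing all snapshots as a read-only filesystem."""
    return ["restic", "mount", mountpoint]


if __name__ == "__main__":
    # Print the commands instead of executing them.
    for cmd in (
        backup_cmd(["/etc", "/home"]),
        restore_cmd("/tmp/restore"),
        mount_cmd("/mnt/restic"),
    ):
        print(shlex.join(cmd))
```

To actually run one of these, something like `subprocess.run(backup_cmd(["/etc"]), env=restic_env(), check=True)` would do; `check=True` makes a failed backup raise an exception instead of passing silently.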

Borg backup came in pretty close to Restic. The main reason I chose Restic is its support for backends other than SFTP. The cheapest storage these days is object storage such as Backblaze B2 and Wasabi. If Meredith’s server goes down, with Borg backup I’d have to redo my backup strategy entirely. With Restic I have the option to quickly add a new cloud backup target.

Looking at my threat model there are two potential issues with Restic:

- A compromised server would have access to delete its own backups. This can be mitigated by storing the backup on a VM that is backed by storage configured with periodic immutable ZFS snapshots.

- Because restic uses a push instead of a pull model, a compromised server would also have access to other servers’ backups, increasing the risk of data exfiltration. At the cost of some deduplication benefits this can be mitigated by setting up one backup repository per host, or at the very least by creating separate repos for groups of hosts (e.g. one restic repo for Minecraft servers and a separate restic repo for web servers).
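The per-host versus per-group trade-off can be sketched as a small mapping from host to repository URL. Everything here is hypothetical: the base location, the host names, and the group names are invented for illustration and are not taken from the setup described above.

```python
# Hypothetical base location and host-to-group mapping, for illustration only.
BASE = "sftp:backupuser@backup.example.com:/storage"

HOST_GROUPS = {
    "mc01": "minecraft",
    "mc02": "minecraft",
    "web01": "web",
}


def repo_for(host: str, per_host: bool = True) -> str:
    """Return the restic repository URL a host should push to.

    per_host=True gives every host its own repository (best isolation).
    per_host=False shares one repository per group, which keeps some
    deduplication across the group but lets its hosts read each other's data.
    """
    if per_host:
        return f"{BASE}/{host}"
    group = HOST_GROUPS.get(host, host)  # ungrouped hosts fall back to their own repo
    return f"{BASE}/{group}"


if __name__ == "__main__":
    for name in ("mc01", "mc02", "web01", "db01"):
        print(name, repo_for(name), repo_for(name, per_host=False))
```

Separate paths alone are probably not enough; for the isolation to mean anything, each repository would also need its own password and ideally its own SFTP account.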

Automating Restic Deployment

Obviously it would be ridiculous to configure 50 servers by hand. To automate I used two Ansible Galaxy roles. I created https://galaxy.ansible.com/ahnooie/generate_ssh_keys which automatically generates ssh keys and copies the key ids to the restic backup target. The second role https://galaxy.ansible.com/paulfantom/restic automatically installs and configures a restic job on each server to run from cron.

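Using the above roles, here is the Ansible Playbook I used to configure restic backups across all my servers. It sets things up so that each server is backed up once a day at a random time. The time is seeded by the host’s inventory name, so it stays the same for a given host from one playbook run to the next: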

```yaml
---
- hosts: linux_group,!do_not_backup
  roles:
    - role: ahnooie.generate-ssh-keys
      vars:
        generate_ssh_keys_target_server: "resticbackups.example.com"
        generate_ssh_keys_server_user: "resticuser"
    - role: paulfantom.restic
      vars:
        restic_cron_mailto: "[email protected]"
        restic_repos:
          - name: backup01
            url: sftp:[email protected]:/storage/resticbk
            password: "{{ restic_repo_pass }}"
            jobs:
              - command: 'restic backup /etc /opt /home /var /srv /root --exclude /var/lib/lxcfs/cgroup'
                at: '{{ 59 |random(seed=inventory_hostname) }} {{ 23 |random(seed=inventory_hostname) }} * * *'
                user: resticuser
            retention_time: '{{ 59 |random(seed=inventory_hostname_short ) }} {{ 23 |random(seed=inventory_hostname_short) }} * * {{ 6 |random(seed=inventory_hostname_short) }}'
            retention:
              last: 5
              hourly: 4
              daily: 14
              weekly: 9
              monthly: 4
              yearly: 5
```
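The `random(seed=inventory_hostname)` filter in the playbook spreads the servers across the day while keeping each server’s slot stable. Here is a rough Python sketch of the same idea. It is not Ansible’s actual algorithm: it derives the minute and hour from a SHA-256 hash of the hostname, and the function name is made up for illustration.

```python
import hashlib


def daily_cron_slot(hostname: str) -> str:
    """Derive a stable minute and hour from the hostname and return a cron expression.

    The same hostname always yields the same schedule, while different
    hostnames tend to land on different times.
    """
    digest = hashlib.sha256(hostname.encode("utf-8")).digest()
    minute = digest[0] % 60  # 0-59
    hour = digest[1] % 24    # 0-23
    return f"{minute} {hour} * * *"


if __name__ == "__main__":
    # Hypothetical hostnames, for illustration only.
    for host in ("web01.example.com", "mc01.example.com"):
        print(host, daily_cron_slot(host))
```

Tying the schedule to the hostname means rerunning the playbook does not reshuffle every cron entry, and the backup target does not get hit by all 50 servers in the same minute.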

Manual Steps

I’ve minimized manual steps but some still must be performed:

- Backup to cold storage. This is archiving everything to an external hard drive and then leaving it offline. I do this manually once a year on World Backup Day and also after major events (e.g. doing taxes, taking awesome photos, etc.). This is my safety net in case online backups get destroyed.

- Test restores. I do this once a year on World Backup Day.

- Verify backups are running. I have a reminder set to do this once a quarter. With Duplicati I can check in the web UI, and with Restic a single command gets me a list of hosts with the most recent backup date for each.
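The quarterly check could also be scripted. The sketch below assumes the output of `restic snapshots --json` has been captured as a string and that each snapshot object carries `time` and `hostname` fields; the two-day threshold and the function names are assumptions chosen for illustration.

```python
import json
import re
from datetime import datetime, timedelta, timezone


def parse_time(value: str) -> datetime:
    """Parse an RFC 3339 timestamp, trimming fractional seconds to microseconds."""
    value = value.replace("Z", "+00:00")
    # Trim or pad the fraction to exactly six digits so fromisoformat accepts it.
    value = re.sub(
        r"\.(\d+)", lambda m: "." + m.group(1)[:6].ljust(6, "0"), value, count=1
    )
    parsed = datetime.fromisoformat(value)
    if parsed.tzinfo is None:  # assume UTC when no offset is given
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed


def latest_by_host(snapshots_json: str) -> dict:
    """Map each hostname to the time of its most recent snapshot."""
    latest = {}
    for snap in json.loads(snapshots_json):
        when = parse_time(snap["time"])
        host = snap["hostname"]
        if host not in latest or when > latest[host]:
            latest[host] = when
    return latest


def stale_hosts(latest: dict, now: datetime,
                max_age: timedelta = timedelta(days=2)) -> list:
    """Return the hosts whose newest snapshot is older than max_age."""
    return sorted(host for host, when in latest.items() if now - when > max_age)
```

Run from cron with the result mailed out, a check like this would move "verify backups are running" from the manual list toward the automated one, though it only proves that snapshots exist, not that they restore.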

Cast your bread upon the waters,

for you will find it after many days.

Give a portion to seven,

or even to eight,

for you know not what disaster may happen on earth.

Solomon

Ecclesiastes 11:1-2 ESV

Hi Ben,

Did you consider Arq for windows backup? I’ve been using it in the new post-crashplan world, but I will check out duplicati now.

Hi, Gabe! No, I didn’t see that one. Arq looks like a good deal for multiple computers since the license is for unlimited computers and it includes a TB. I’ll have to keep that one in mind.

Note you can also use Arq without bundled storage and send your data wherever you like (S3, B2, Wasabi and SFTP are all supported)

I like Arq, have it on all family computers, OS X and Windows; it uploads to Glacier, B2 and SFTP flawlessly.

Nice to see other people coming to almost the same conclusions as myself :)

I was seconds away from purchasing Arq (for windows) for backing up a windows computer when i found this post, and decided to give duplicati a try. I do use Arq on Mac, and it’s solid and has never let me down, but the user interface, especially for restoring is a bit clumsy.

I thought i’d add a few of my own observations:

I have no idea how much storage you’re backing up, but i evaluated Restic for my own needs, backing up ~14TB to two external 8TB drives, as well as a remote backup on an Odroid HC2 ($50 8 core/2GB Ram ARM board, with Ubuntu 16.04 and restic-rest server), and i found that Restic would more or less choke to death once i got to 4TB of backup storage in the same repository. Backups would take 3-4 days for the initial backup, subsequent backups would take 8-12 hours, and prune operations would take 23+ hours to complete.

I also evaluated Borgbackup, and the initial backup took about 28 hours, and subsequent backups would complete in minutes. Prune operations take 30 minutes.

As for exposing network ports, my remote backup box dials back home via VPN (IKEv2/IPSEC), and backups are done to the VPN address, meaning i don’t have to open any ports on the remote router. I run on a decent netgate router, which can easily do 300 mbit/s with IPSEC, and backups usually run slower than that anyway :)

Thanks Jimmy, that’s great to know for larger backups! My backups are much smaller, in the 1-2TB range so I haven’t had that issue yet.

Another open source tool for backing up Windows, UrBackup, also exists. I had fewer problems with that (although by default the software assumes the backup server is on the same LAN, it can be configured to use an Internet backup server).

I’m also in the process of picking the backup sw. I’ve narrowed it down to Duplicati and Restic which led me to this page. :)

Could you write your thoughts on duplicati vs restic? Why is one better than the other, in what use-cases, performance etc.?

Why did you choose Duplicati for Windows and not for Linux?

My backup plan is similar to yours; local backup of two Linux computers (maybe one Windows) and sync to S3 Glacier Deep Archive.

Hi, Davor. My main reason is that I wanted a user interface for my workstations (which are all Windows 10) and a CLI for my Linux servers since they’re all headless. On S3 Glacier you may want to look into whether Restic or Duplicati will even work with it. See: https://forum.restic.net/t/restic-and-s3-glacier-deep-archive/1551/4 Let me know what you end up going with.

Duplicati can also be used as a Linux service, while still using the web interface. Been using it for years, but I’m considering switching to Restic, though, because Duplicati tends to not work very well with interrupted backups, and requires manual intervention (database rebuilding) too often.