Playing with bhyve

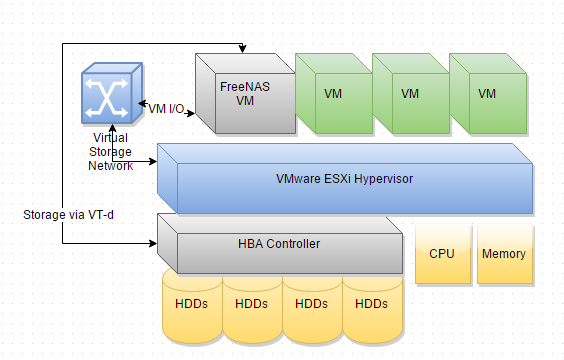

Here’s a look at Gea’s popular All-in-one design which allows VMware to run on top of ZFS on a single box using a virtual 10Gbe storage network. The design requires an HBA and a CPU that supports VT-d so that the storage can be passed directly to a guest VM running a ZFS server (such as OmniOS or FreeNAS). Then a virtual storage network is used to share the storage back to VMware.

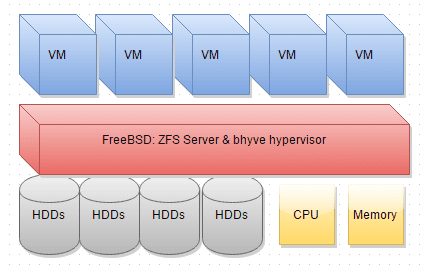

bhyve can simplify this design: because it runs under FreeBSD, the host already has a ZFS server. This not only simplifies the design, it could also allow a hypervisor to run on simpler, less expensive hardware. The same design in bhyve eliminates the need for a dedicated HBA and a CPU that supports VT-d.

I’ve never understood the advantage of Type-1 hypervisors (such as VMware and Xen) over Type-2 hypervisors (like KVM and bhyve). Type-1 proponents say the hypervisor runs on bare metal instead of an OS… I’m not sure how VMware isn’t considered an OS, except that it is a purpose-built OS and probably smaller. It seems you could take a Linux distribution running KVM and take away features until at some point it becomes a Type-1 hypervisor. Which is all fine, but it could actually be a disadvantage if you wanted some of those features (like ZFS). A Type-2 hypervisor that supports ZFS appears to have a clear advantage (at least theoretically) over a Type-1 for this type of setup.

In fact, FreeBSD may end up becoming the best all-in-one virtualization/storage platform. You get ZFS and bhyve, and also jails. You really only need to run bhyve when virtualizing a different OS.

bhyve is still pretty young, but I thought I’d run some tests to see where it’s at…

Environments

This is running on my X10SDV-F Datacenter in a Box Build.

In all environments the following parameters were used:

- Supermicro X10SDV-F

- Xeon D-1540

- 32GB ECC DDR4 memory

- IBM ServerRaid M1015 flashed to IT mode.

- 4 x HGST Ultrastar 7K300 2TB enterprise drives in RAID-Z

- One DC S3700 100GB over-provisioned to 8GB used as the log device.

- No L2ARC.

- Compression = LZ4

- Sync = standard (unless specified).

- Guest (where tests are run): Ubuntu 14.04 LTS, 16GB disk, 4 cores, 1GB memory.

- OS defaults are left as is; I didn’t try to tweak the number of NFS servers, sd.conf, etc.

- My tests fit inside of ARC. I ran each test 5 times on each platform to warm up the ARC. The results are the average of the next 5 test runs.

- I only tested an Ubuntu guest because it’s the only distribution I run (in quantity, anyway) in addition to FreeBSD. I suppose a more thorough test should include other operating systems.
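Roughly, the storage settings above map onto commands like the following on a FreeBSD-style system. This is a sketch, not the exact commands used for these tests: the pool name `tank`, the device names (`da0` to `da3`, `ada0`) and the `slog0` label are made-up placeholders, and partitioning only 8GB of the SSD is just one simple way to leave the rest of it unused. The script only prints the commands unless `DRY_RUN=0` is set.

```bash
#!/bin/bash
# Sketch only: prints the commands unless DRY_RUN=0 is set.
# Pool name, device names and the GPT label are hypothetical placeholders.
DRY_RUN=${DRY_RUN:-1}
POOL=${POOL:-tank}

run() {
    if [ "$DRY_RUN" = 1 ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

create_pool() {
    # Use only an 8GB partition of the SSD as the log device.
    run gpart create -s gpt ada0
    run gpart add -t freebsd-zfs -s 8G -l slog0 ada0
    # Four data disks in a single RAID-Z vdev, plus the separate log device.
    run zpool create "$POOL" raidz da0 da1 da2 da3 log gpt/slog0
    # LZ4 compression; sync is left at its default (standard).
    run zfs set compression=lz4 "$POOL"
    run zfs get compression,sync "$POOL"
}

create_pool
```

On OmniOS the device naming differs and there is no `gpart`, so treat this as a FreeBSD/FreeNAS-flavoured illustration only.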

The environments were set up as follows:

1 – VM under ESXi 6 using NFS storage from FreeNAS 9.3 VM via VT-d

- FreeNAS 9.3 installed under ESXi.

- FreeNAS is given 24GB memory.

- HBA is passed to it via VT-d.

- Storage shared with VMware via NFSv3, virtual storage network on VMXNET3.

- Ubuntu guest given VMware para-virtual drivers

2 – VM under ESXi 6 using NFS storage from OmniOS VM via VT-d

- OmniOS r151014 LTS installed under ESXi.

- OmniOS is given 24GB memory.

- HBA is passed to it via VT-d.

- Storage shared with VMware via NFSv3, virtual storage network on VMXNET3.

- Ubuntu guest is given VMware para-virtual drivers

3 – VM under FreeBSD bhyve

- bhyve running on FreeBSD 10.1-Release

- Guest storage is file image on ZFS dataset.

4 – VM under FreeBSD bhyve sync always

- bhyve running on FreeBSD 10.1-Release

- Guest storage is file image on ZFS dataset.

- Sync=always
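To make environments 3 and 4 a bit more concrete, here is a hedged sketch of a file-backed bhyve guest on a ZFS dataset, with the `sync` property switched between the two runs. The dataset `tank/vm`, the image path, the `tap0` interface, the `device.map` file and the VM name are all assumptions for illustration, as is the choice of `virtio-blk`; the flags follow the usual FreeBSD 10.x pattern of loading a Linux guest with `grub-bhyve` (from the grub2-bhyve port) and then starting `bhyve`. By default the script only prints the commands.

```bash
#!/bin/bash
# Sketch only: prints the commands unless DRY_RUN=0 is set.
# Dataset, image path, tap device and VM name are hypothetical.
DRY_RUN=${DRY_RUN:-1}
DATASET=tank/vm
IMG=/tank/vm/ubuntu.img
VM=ubuntu

run() {
    if [ "$DRY_RUN" = 1 ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

setup_guest_storage() {
    # The guest disk is a plain file on a ZFS dataset.
    run zfs create "$DATASET"
    run truncate -s 16G "$IMG"
}

set_sync() {
    # Environment 3 uses "standard", environment 4 uses "always".
    run zfs set sync="$1" "$DATASET"
}

boot_guest() {
    # Load the Linux kernel with grub-bhyve, then start the VM
    # with 4 vCPUs and 1GB of memory.
    run grub-bhyve -m device.map -r hd0,msdos1 -M 1024M "$VM"
    run bhyve -A -H -P -c 4 -m 1024M \
        -s 0:0,hostbridge -s 1:0,lpc \
        -s 2:0,virtio-net,tap0 \
        -s 3:0,virtio-blk,"$IMG" \
        -l com1,stdio "$VM"
}

setup_guest_storage
set_sync standard
boot_guest
```

Switching to environment 4 would then just be `set_sync always` before the next benchmark run.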

Benchmark Results

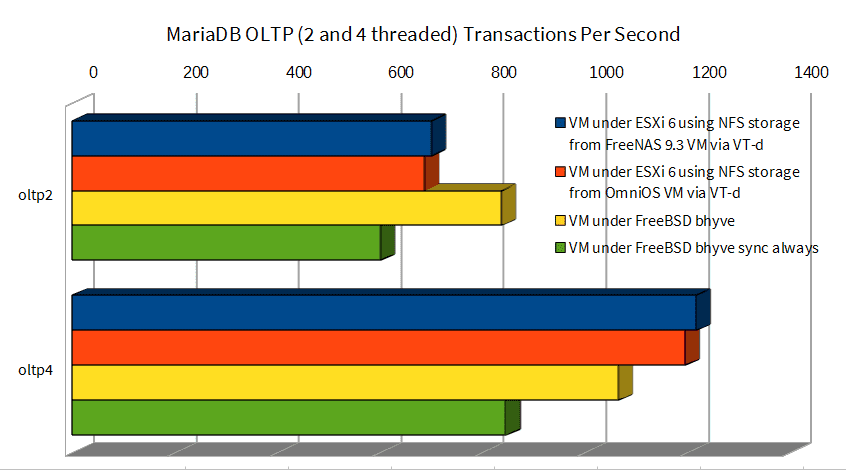

MariaDB OLTP Load

This test is a mix of CPU and storage I/O. bhyve (yellow) pulls ahead in the 2 threaded test, probably because it doesn’t have to issue a sync after each write. However, it falls behind on the 4 threaded test even with that advantage, probably because it isn’t as efficient at handling CPU processing as VMware (see next chart on finding primes).

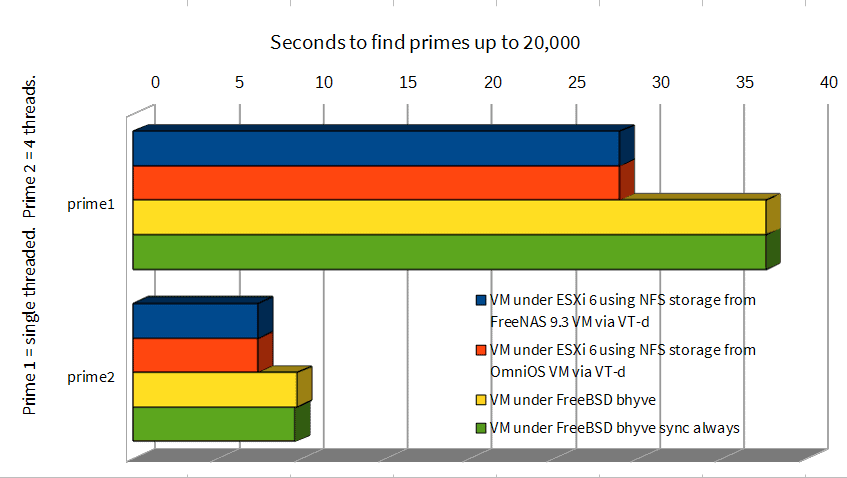

Finding Primes

Finding prime numbers with a VM under VMware is significantly faster than under bhyve.

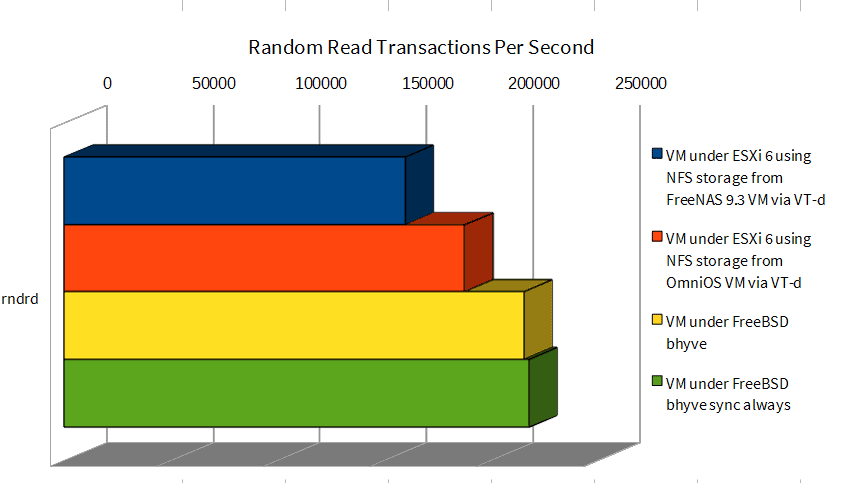

Random Read

bhyve has an advantage, probably because it has direct access to ZFS.

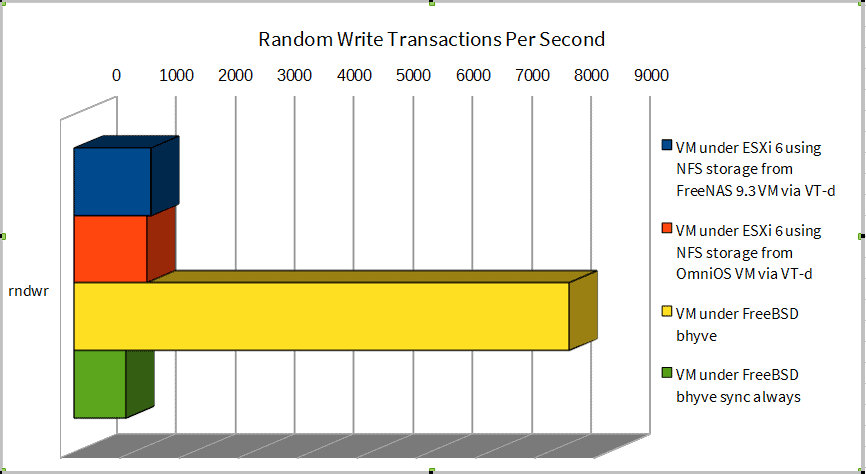

Random Write

With sync=standard bhyve has a clear advantage. I’m not sure why VMware can outperform bhyve sync=always. I am merely speculating but I wonder if VMware over NFS is translating smaller writes into larger blocks (maybe 64k or 128k) before sending them to the NFS server.

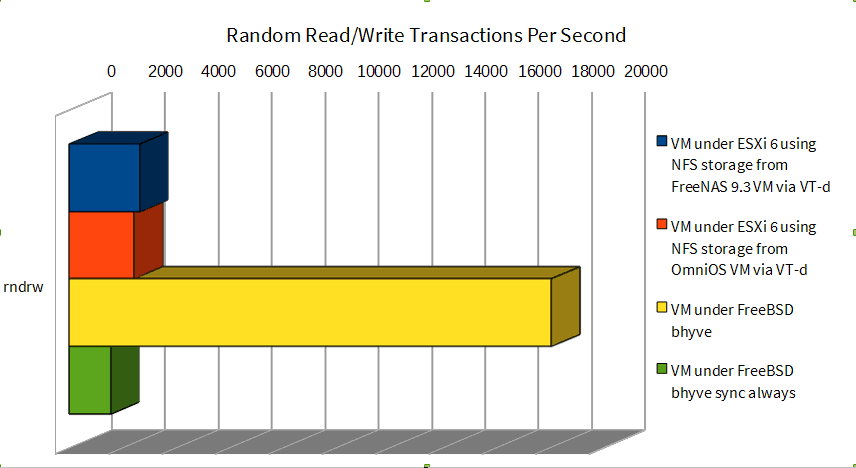

Random Read/Write

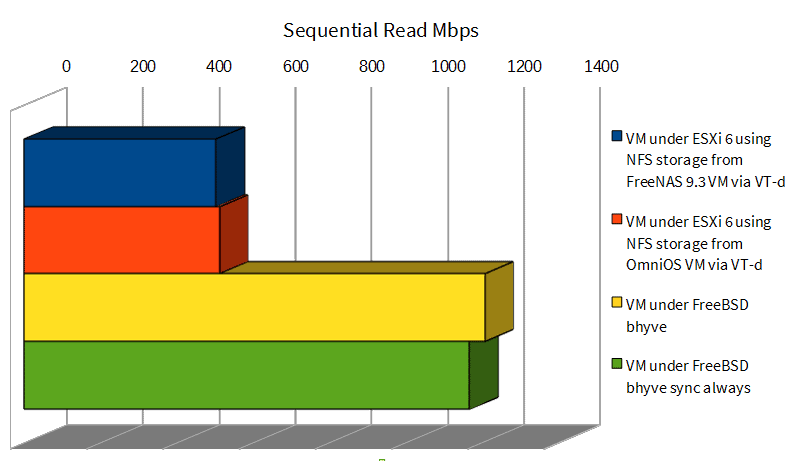

Sequential Read

Sequential reads are faster with bhyve’s direct storage access.

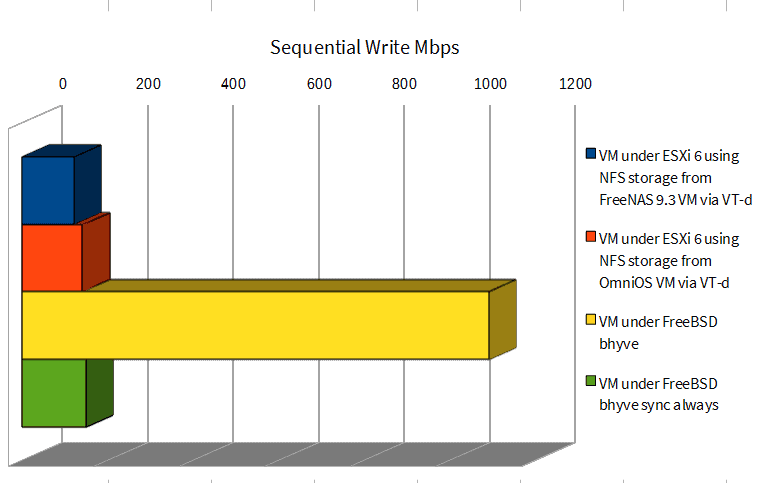

Sequential Write

What not having to sync every write will gain you..

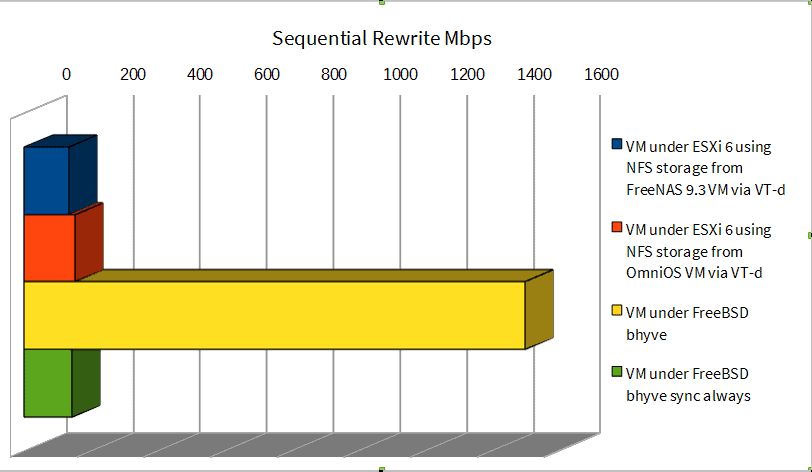

Sequential Rewrite

Summary

VMware is a very fine virtualization platform that’s been well-tuned. All that overhead of VT-d, virtual 10GbE switches for the storage network, VM storage over NFS, etc. is not hurting its performance, except perhaps on sequential reads.

For as young as bhyve is, I’m happy with its performance compared to VMware, although it appears to be slower on the CPU-intensive tests. I didn’t intend to compare CPU performance, so I haven’t run enough of a variety of tests to see what the difference is there, but it appears VMware has an advantage.

One thing that is not clear to me is how safe running sync=standard is on bhyve. The ideal scenario would be honoring fsync requests from the guest, however, I’m not sure if bhyve has that kind of insight from the guest. Probably the worst case under this scenario with sync=standard is losing the last 5 seconds of writes–but even that risk can be mitigated with battery backup. With standard sync there’s a lot of performance to be gained over VMware with NFS. Even if you run bhyve with sync=always it does not perform badly, and it even outperforms the VMware All-in-one design on some tests.
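One rough way to get a hint about whether synchronous writes from the guest are reaching stable storage is to compare buffered and `O_DSYNC` writes from inside the guest. The sketch below assumes GNU `dd` (as shipped with Ubuntu); the 4k block size, the write count and the target directory are arbitrary illustrative values. If both runs report similar throughput, sync requests are probably being absorbed by a cache somewhere below the guest; a large gap suggests they are being passed down. It is a hint, not proof of safety.

```bash
#!/bin/bash
# Compare buffered and synchronous small writes from inside the guest.
# Requires GNU dd for oflag=dsync. Directory and count are illustrative.
sync_write_test() {
    local dir=${1:-/tmp}
    local count=${2:-1000}
    local f="$dir/synctest.$$"

    echo "buffered writes:"
    dd if=/dev/zero of="$f" bs=4k count="$count" 2>&1 | tail -n 1

    echo "O_DSYNC writes:"
    dd if=/dev/zero of="$f" bs=4k count="$count" oflag=dsync 2>&1 | tail -n 1

    rm -f "$f"
}

sync_write_test "${TEST_DIR:-/tmp}" "${COUNT:-1000}"
```

Run it once with the dataset at sync=standard and once at sync=always to see how much the host-side setting changes what the guest observes.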

The upcoming FreeNAS 10 may be an interesting hypervisor + storage platform, especially if it provides a GUI to manage bhyve.

VMWare ESXi is an enterprise platform used for Cloud and Datacenter virtualisation on a massive scale. You are comparing an enterprise hypervisor used in datacenters all around the world with a free non-enterprise hypervisor that can be installed on consumer hardware. I understand this review is just for the consumer market but it’s still comparing two solutions for two different problems.

You also use non-enterprise consumer hardware to do your review. Now, this review may just be for consumers who want to compare performance between different hypervisors on consumer hardware that use ZFS, but I’ve never come across ZFS in any corporations where they need sequential performance, since most transactions rely on random reads and writes, hence the need for large SANs with 15k RPM disks and SSDs for flash cache, etc. I would expect the review results to be quite different on the hardware VMWare ESXi is commonly used on. In fact, having worked in corporations and on rack-mounted servers with fast SANs, I would say that with certainty.

How about comparing bhyve with VirtualBox or VMware Workstation/Player, or maybe even Citrix XenServer or Hyper-V for a head-to-head comparison with VMware ESXi?

Also, while bhyve is classed as a type 2 hypervisor, like KVM (Kernel Virtual Machine), it’s a Kernel module for FreeBSD rather than a separate hypervisor layer underneath the OS, so it is still technically a type 1 hypervisor.

Hi, Harpinder. Yeah, this isn’t meant to be an enterprise level comparison. I’m using the free version of ESXi and specifically comparing its capability operating as an all-in-one solution where the storage and hypervisor are on the same box–which probably is only applicable to certain people running such configurations in their homelabs. For all I know I might be the only person in the world interested in the comparison I did. |:-)

I agree that bhyve isn’t widespread yet, but it will be enterprise capable once it’s matured. It will probably not ever see as much widespread use as VMware, and it probably won’t see use in corporations without FreeBSD expertise, but I’d be surprised if some FreeBSD shops such as Netflix or Groupon aren’t already testing it.

Virtualbox and VMware workstation are desktop solutions, they’re not in the same class… a comparison against Xen, Hyper-V, or KVM would be appropriate …but I’m not as interested in those platforms.

If ZFS isn’t an enterprise solution I don’t know what is! It was designed to solve the problem of silent data corruption for organizations processing petabytes of data. ZFS can certainly be configured for performance. Max out the memory for ARC (which will act as a read cache for the most frequent reads in the working set so it doesn’t have to hit data disks), use low latency HGSTs for log devices to cache random writes, fast SSDs for L2ARC to cache frequent reads that won’t fit into memory. For the data drives if you want maximum performance use all SSDs or 15K like you mentioned. The benchmarks here will be completely irrelevant for such configurations. My testing is with one guest running on top of a hypervisor–for the setups you’re thinking of you’d want to benchmark concurrent load from thousands of VMs simultaneously and like you said it could perform entirely differently under that scenario.

I found the comparisons interesting. They’re also not necessarily two different problems. Plenty of organisations fork over a truckload of money for VMWare ESXi licenses in addition to stringent requirements for hardware, networking and storage. Why? Do the benefits outweigh the open-source counterparts? Would it be worth scaling down VMWare to a handful of critical systems and running everything else on commodity hardware with commodity storage backed by open SDN? Are the performance gains really worth the admin overhead and cost implications? Not poking holes, just presenting a view from a place with a massive VMware investment, and competing interest from a KVM platform that is currently eyeing out its lunch.

Hi, Paul. When it comes to performance I haven’t done benchmarking between VMware and KVM, but my guess is performance is not as much of a factor as licensing costs versus admin overhead like you mentioned. Generally the best hypervisor is the one you are comfortable with and have battle tested.

VMware has a lot going for it, it does memory de-duplication across each hypervisor which may drive down hardware costs over KVM, high availability and fault tolerance can be setup quickly, and if you’re running Windows you don’t have to load virtio drivers on the guests. And pretty much everything works with little to no tweaking–admins can spend their time managing servers instead of managing the hypervisor. This makes up for a lot of the licensing costs in my book. VMware scales well… homelabs and mom and pop businesses can run the free hypervisor, VMware Essentials Plus works for up to three hosts for small businesses, and for medium to large businesses their enterprise licensing adds DRS, etc. I do see that hosting companies like Rackspace, Amazon, DigitalOcean, etc. tend to not use VMware. Probably at that scale or for that business model the licensing costs from VMware are high compared to the administration overhead.

The only difference between “enterprise” and “consumer” hardware is the marketing buzzwords. 20 yrs of data centers in telecom business taught me that much. Don’t take my word for it! Ask Google, Facebook, Netflix, Juniper and many other giants on the market.

Good point Daniel… I see “enterprise” as offering service on top of the hardware–for instance, if you buy a storage solution from EMC, NetApp or HP you’re mainly paying for them to design and implement the solution, monitor it for you, perform tuning and software updates, onsite support, etc. I typically think enterprise offerings are good when you can’t afford downtime or performance degradation, but you’re not large enough for it to be cost effective in hiring your own staff to manage a solution on hardware like Supermicro with the same level of service.

It would be interesting to see the same tests repeated with bhyve from the upcoming FreeBSD 10.2. It received a number of performance improvements in the network and storage drivers that may change the test results.

Thanks for the info Alexander, I’ll try to repeat the benchmark after 10.2 is released.

One thing that is not clear to me is how safe running sync=standard is on bhyve.

Might I suggest “not at all”?

The ideal scenario would be honoring fsync requests from the guest, however I’m not sure if bhyve has that kind of insight from the guest. Probably the worst case under this scenario with sync=standard is losing the last 5 seconds of writes

No, I don’t think so. If the host OS might write out blocks in its buffer cache in any order (which I believe would be the case), then you could end up with inconsistent data on disk that the guest OS believes it synced safely to avoid. That would result in a corrupt database or other storage data.

It may be possible to get the kind of insight you suggest. Certainly the guest OS has to propagate the fsync operations to its storage drivers via write barriers or other types of sync operations. But the performance numbers you show seem to indicate it’s not happening.

Hi, Greg. Thanks for bringing up that scenario–I hadn’t considered the risk of out-of-order writes. According to Matthew Ahrens, ZFS write order is guaranteed for writes to a single file (which in this case would be the virtual drive file)–does that address your concern?

Of course best case scenario would be if bhyve honored fsync from the guest but I haven’t heard for certain one way or the other if that’s the case.

Cache flush in bhyve was improved recently. Now (10.2) it should work right. Unless the guest OS cannot issue flushes properly, it should not be necessary to set sync=always. sync=always may be needed where the storage subsystem is a separate host (physical or virtual, like FreeNAS on another VM/host) that may crash or reboot independently of the guest OS, which may cause unnoticed data loss. With bhyve and storage running directly on the host, a storage crash means a guest OS crash too. That is completely equivalent to a power loss on a normal hardware system with an HDD, and should be handled by the guest OS’s usual dirty-reboot procedures.

That’s good news Alexander. Just to clarify, you’re saying that if you’re running storage on a separate host, say a FreeNAS system, that the cache flush commands are not honored by iSCSI or NFS and therefore it’s safer to set sync=always on those systems? I’m guessing those are limitations of the iSCSI and NFS protocols?

FreeNAS honors cache flushes in all cases when they are sent. But the problem is that network storage requires a different cache policy than a local drive. A local drive has no right to lose any data in any case other than full power loss, and full power loss there is rare and can never pass unnoticed by the initiator. At the same time, a NAS can lose power, crash, or reboot independently of the initiator, and in that case it may lose unflushed data.

The NFS protocol, originally designed for unreliable networks, provides all the means to track server reboots and to replay potentially lost requests if that happens. The only problem with NFS is that while FreeNAS correctly handles both sync and async semantics, VMware is too lazy to implement protocol recommendations for async writes, sending all requests as synchronous, which effectively behaves the same way as sync=always in ZFS.

With iSCSI the problem is different. iSCSI is just a different transport for the same SCSI protocol used for local disks. Because of that, none of the initiators I know of bother to support request replay in case of target failure. So when an initiator sends a cache flush, it has no idea whether the NAS still has the written data in cache, or whether it was lost due to a NAS reboot. I don’t know whether VMware propagates flushes from guest to target, but even if it does, in the case of a NAS it may be insufficient.

For local storage in the case of bhyve, cache flushes propagated from the guest are sufficient for data consistency.

Thanks for the explanation, so it seems, at least under FreeBSD 10.2 that the local storage will work the way I want it to. I’m looking forward to the release.

> The only problem with NFS is that while FreeNAS correctly handles both sync and async semantics, VMware is too lazy to implement protocol recommendations for async writes, sending all requests as synchronous, which effectively behaves the same way as sync=always in ZFS.

Consider the same scenario with bhyve instead of VMware. If the bhyve host has an NFS share from FreeNAS mounted and stores a bhyve guest disk image on that share, and the guest requests a cache flush, is the bhyve host going to send that NFS write with a COMMIT/cache flush, causing FreeNAS to handle it as a sync (assuming the underlying dataset has sync=standard)?

Yes, it should. The FreeBSD initiator, unlike VMware’s, supports both sync and async modes. It should initially send all writes as async, but request cache flushes every N megabytes and on guest OS request. In case of a server crash the FreeBSD client will retry requests.

Hi Ben; any follow-up testing performed on 10.2? I find this really interesting. Would also be curious if you could add Windows 2012 R2 (an evil I can’t avoid, but, if possible, I’d love to run it on bhyve/zfs instead of metal) to your testing.

Hi, Matt. I haven’t made time to start with 10.2 testing, I’m not entirely sure I’ll get to it. It will be nice when bhyve can run Windows with similar performance (maybe it’s already there?)

Out of interest, what was the primes benchmark (and parameters) ?

Hi, Peter…

For the single-threaded…

sysbench --test=cpu --cpu-max-prime=20000 --num-threads=1 run

And the four-threaded…

sysbench --test=cpu --cpu-max-prime=20000 --num-threads=4 run

As an alternative to bhyve you may consider Joyent SmartOS, which is based on Illumos. You get: ZFS, Zones, KVM, Dtrace..

It is field-proven, as they have used it to power their public cloud for years, with their open-source IaaS solution, SmartDatacenter.

You can run Illumos or Linux inside zones (ABI translation), and SmartDatacenter can present your cluster as an elastic docker host.

There is another opensource IaaS solution based on SmartOS: Project Fifo.

Thanks, Jim. I actually tried Project Fifo awhile back but it certainly wasn’t ready at the time, but it may be time to try it again. Last I looked at running KVM on a Solaris based OS it seems like there was a networking limitation where I had to have a physical NIC per VM (my memory is a little fuzzy about this), is that no longer the case?

Where are the sources for these tests? You seem to be quite experienced computer guy, so there is no doubt that you know about the fact that no test will be taken seriously until the exact testing procedure has been published / sources for the test are available. I did miss the link to the exact test description.

Here you go… I ran the script once without taking measurements to warm the ARC, then ran it again and took the results from the second run.

```bash
#!/bin/bash

# One-time preparation, run manually before the first benchmark run:
# sysbench --test=fileio --file-total-size=6G prepare
# sysbench --test=oltp --oltp-table-size=1000000 --mysql-db=test --mysql-user=root --mysql-password=test prepare

# Clear results from any previous run (-f: no error if a file is missing).
rm -f result_rndrw result_rndwr result_oltp2 result_oltp4 result_seqwr \
      result_seqrd result_rndrd result_seqrewr result_prime1 result_prime4

# CPU: find primes, 1 thread.
for i in $(seq 1 5); do
    echo "PRIME"
    sleep 5
    sysbench --test=cpu --cpu-max-prime=20000 --num-threads=1 run >> result_prime1
done
sleep 5
grep "total time:" result_prime1 > final_prime1

# CPU: find primes, 4 threads.
for i in $(seq 1 5); do
    echo "PRIME4"
    sleep 5
    sysbench --test=cpu --cpu-max-prime=20000 --num-threads=4 run >> result_prime4
done
sleep 5
grep "total time:" result_prime4 > final_prime4

# File I/O: random read/write.
for i in $(seq 1 5); do
    echo "RNDRW"
    sleep 5
    sysbench --test=fileio --file-total-size=6G --file-test-mode=rndrw --max-time=300 run >> result_rndrw
done
sleep 5
grep Requests result_rndrw | cut -d " " -f1 > final_rndrw

# File I/O: random write.
for i in $(seq 1 5); do
    echo "RNDWR"
    sleep 5
    sysbench --test=fileio --file-total-size=6G --file-test-mode=rndwr --max-time=300 run >> result_rndwr
done
sleep 5
grep Requests result_rndwr | cut -d " " -f2 > final_rndwr

# MariaDB OLTP, 2 threads.
for i in $(seq 1 5); do
    echo "OLTP2"
    sleep 5
    sysbench --test=oltp --oltp-table-size=1000000 --mysql-db=test --mysql-user=root --mysql-password=test --num-threads=2 --max-time=60 run >> result_oltp2
done
sleep 5
grep transactions: result_oltp2 | cut -d "(" -f 2 | cut -d " " -f 1 > final_oltp2

# MariaDB OLTP, 4 threads.
for i in $(seq 1 5); do
    echo OLTP4
    sleep 5
    sysbench --test=oltp --oltp-table-size=1000000 --mysql-db=test --mysql-user=root --mysql-password=test --num-threads=4 --max-time=60 run >> result_oltp4
done
sleep 5
grep transactions: result_oltp4 | cut -d "(" -f 2 | cut -d " " -f 1 > final_oltp4

# File I/O: sequential write (requests/sec and throughput).
for i in $(seq 1 5); do
    echo SEQWR
    sleep 5
    sysbench --test=fileio --file-total-size=6G --file-test-mode=seqwr --max-time=300 run >> result_seqwr
done
sleep 5
grep Requests result_seqwr | cut -d " " -f1 > final_seqwr
grep transferred result_seqwr | cut -f2 -d "(" | cut -f1 -d "G" | cut -f1 -d "M" > final_seqwr_tp

# File I/O: sequential read (requests/sec and throughput).
for i in $(seq 1 5); do
    echo SEQRD
    sleep 5
    sysbench --test=fileio --file-total-size=6G --file-test-mode=seqrd --max-time=300 run >> result_seqrd
done
sleep 5
grep Requests result_seqrd | cut -d " " -f1 > final_seqrd
grep transferred result_seqrd | cut -f2 -d "(" | cut -f1 -d "G" | cut -f1 -d "M" > final_seqrd_tp

# File I/O: random read.
for i in $(seq 1 5); do
    echo RNDRD
    sleep 5
    sysbench --test=fileio --file-total-size=6G --file-test-mode=rndrd --max-time=300 run >> result_rndrd
done
sleep 5
grep Requests result_rndrd | cut -d "R" -f1 > final_rndrd

# File I/O: sequential rewrite.
# (The script as posted has no final_seqrewr extraction step.)
for i in $(seq 1 5); do
    echo SEQREWR
    sleep 5
    sysbench --test=fileio --file-total-size=6G --file-test-mode=seqrewr --max-time=300 run >> result_seqrewr
done
```
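Since the reported numbers are averages over five runs, the averaging step can be sketched as follows. This is an illustration rather than the exact post-processing used: it assumes that every line containing "Requests" in a result file starts with the requests/sec figure as its first whitespace-separated field, which sidesteps the varying `cut` field numbers above. The `avg_requests` helper name is made up for this example.

```bash
#!/bin/bash
# Average the requests/sec figure over all runs recorded in a result file.
# Assumes the number is the first whitespace-separated field on each line
# containing "Requests" (the same lines the script greps for).
avg_requests() {
    awk '/Requests/ { sum += $1; n++ }
         END { if (n > 0) printf "%.2f\n", sum / n }' "$1"
}

# Print an average for each fileio result file that exists.
for f in result_rndrd result_rndwr result_rndrw \
         result_seqrd result_seqwr result_seqrewr; do
    if [ -f "$f" ]; then
        echo "$f: $(avg_requests "$f") requests/sec (average)"
    fi
done
```

Letting awk split on whitespace means leading spaces in the sysbench output don't shift the field position.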

Have you considered running SmartOS/Illumos/OmniTI directly with Zones and ZFS on their native platform and a full implementation of KVM? While bhyve is interesting, it is not as battle tested as KVM, and Joyent uses this combination at a very large scale in their public cloud.

Hi, John. Yes, I looked at it when KVM was first ported to illumos and it didn’t work for me but maybe it’s time for a second look.

It certainly merits a second look. The initial port of KVM to Illumos/SmartOS was completed 4 years ago. Over the last four years, there have been many changes to stabilize and improve the entire OS including KVM.

Bhyve has run on Illumos/SmartOS/OmniOS since 2018 and is rather mature now. Setup is almost the same as KVM: bhyve runs in a zone just like KVM, and has performance advantages. It is not as fast as the native Linux zones, which run at almost bare-metal speed, but it is improved over KVM considerably. Unfortunately, the native Linux zones haven’t been updated in quite some time. Focus has been on native Illumos and Bhyve.

Good to know, thanks for the updated info!

Did you try running the bhyve guest OS installed on a zvol? Maybe that increases the performance of your guest OS as well. E.g.:

[…] -s 2,virtio-blk,/dev/zvol/virtualization/ubuntu […]

Hi, CurlyMo. No I didn’t, in my past testing zvol has always under-performed file based blocks so I didn’t even try. I can’t find it now but somebody had mentioned they tried running SQL Server on a ZVOL and it didn’t perform well. If I had unlimited time it’s something I probably would have tested.

Really cool stuff man! I’m a big FreeBSD/FreeNAS fan. I think the huge latency you’re seeing in VMware comes from reading and writing data between FreeNAS and the VMs over a network stack (NFS). It doesn’t matter how fast you make your data pipe, the IP network stack is a horrible protocol for raw data transfer, especially if NFS is using TCP (which would be pointless over a virtualized switch). Also, to add to your point about jails vs bhyve: if you just need an application or two from Linux, FreeBSD has a built-in Linux compatibility layer. Soooo, what do we need bhyve for again? The only thing I can think of is full protection for your base kernel from one of the contained processes causing a kernel panic and bringing all instances down. So really one must weigh up the performance hit of virtualization (very little but present on bhyve) vs the potential for a kernel panic (in my experience low).

Cheers,

Russ

Hi There

Just like.. my opinion, but on Type-1 vs Type-2: Type-1 is designed from the ground up to be nothing other than a hypervisor; it’s essentially an embedded OS. Type-2 is a normal OS/kernel with multiple uses which can also do hypervising.

I think there are use cases for both, and type is not always indicative of performance, but I think the primes test is a good example of how a Type-1 with an optimised scheduler might outperform a Type-2 that has other considerations.

True, FreeBSD is certainly more of a general purpose OS than a hypervisor, hopefully bhyve will become faster over time since it runs at the kernel level, but for now VMware is the clear winner on computing performance and it may remain that way for quite awhile.

Really nice comparison. I’d really like to point out that most enterprise people, companies and admins keep propagating the myth of “Enterprise Hardware” when some of the most successful companies in the world use commodity hardware. For instance Google, Yahoo, AWS and many others …

If they were all running so-called “Enterprise Hardware and Software”, prices would be 10X. I am glad the internet runs on OSS, not “Enterprise grade” crap!!!

Awesome article, thnx for the great information! :)

Would love to see a more current test … maybe Bhyve from FreeBSD 12-current, ESXi 6.5 w/ FreeNAS 9.10 (RIP 10), etc.

Unrelated — is OmniOS really dead?!

This was really great. Thank you so much for publishing the results. Honestly yes, I agree with many people here… this test may or may not be useful to corporations, however think about this…. all of us typed in ESXi vs Bhyve in Google…. why? It is simply because we wanted to know the answer. Honestly, I wanted to see even less commercial stuff in here and see what totally retail hardware results would be like. So, we all understand that we may in-fact be comparing apples to oranges, but it doesn’t matter, I want to know the differences between this apple and this orange.

We push things until they break, then we fix it again, it is fun. Right?

You should probably get out more, as 15k RPM disks, flash cache, etc are all currently well supported under pretty much anything that can run ZFS. iXsystems has made quite a splash in the high performance SAN world with their all flash units. ZFS isn’t for “niche” use cases.

As to VMware vSphere/ESXi/vCenter being for massive datacenter deployments? It is not. Almost no one uses it for that. It occupies the middle ground in the Enterprise world. It does not scale or deploy like OpenStack based solutions, and that is why they’re in a pickle now. The small market is being taken by competitors, and the high end is as well. They are being squeezed.

Old news by now, but I’ve been running Joyent SmartOS, and I’ve been loving it.

Daniel Macht says:

December 7, 2015 at 7:16 am

The truth.

Under the same conditions and workload, a mid-range desktop motherboard lasts longer than the corresponding server one. Vast production volumes give a strong base to improve and develop.

Honestly I didn’t read past the first heading/chapter when I rushed here to comment because with that first part you made me see the whole thing from a totally different perspective–think out of the box. I want to thank you for that.

I’ve a very scattered brain so I needed to get it out as soon as possible or I’d forget. I guess now I can just send this to an iPad or something more comfortable and kick back.

Love to see this test repeated in 2019.

Also it would have been good if you could have used ZFS to export block storage ZVOLs rather than via NFS.

Thank you and … do it again with the current stuff if you have time! Awesome article! Thank you again!

This benchmark, together with the very helpful and complementary information in the comments, shows that FreeBSD itself (with native ZFS) can be a hyperconverged solution (a common home for storage and VMs) instead of the giant VMware.

My interest is in using this for edge storage (OT historian, IT database) and IIoT edge business. I’m not so interested in the IT data center scenario, as IT people are generally not open to the open-source side.

Thank you for mind massage|:-)